Can a generalist model learn to specialize?

That's what my classmate Anurag and I wanted to find out when we chose our final project for Computer Vision at GWU. SAM (Meta's Segment Anything Model) is remarkable at what it does. Give it any image, click anywhere, it'll segment something. But "good at everything" often means "not great at anything specific." We wanted to know if we could take that powerful foundation and push it toward tasks where the vanilla model genuinely struggles.

We picked three challenges, each harder than the last. What followed was one failure, one modest success, one breakthrough, and a trip to the Smithsonian Zoo.

Challenge 1: Wood textures, and how we made it 36% worse

We started with wood texture segmentation: teaching SAM to distinguish between different wood grains (oak, walnut, pine) in 2x2 mosaic images. It felt like a reasonable warm-up.

We trained on 500 synthetic mosaics, used Dice loss only, ran 5 epochs. The training loss dropped to 0.0001. We were thrilled.

Then we tested it. On Hard Mode, our "specialized" model scored 59.52% mIoU. The vanilla MobileSAM scored 95.80%.

We made it 36% worse.

Looking back, it was obvious. A training loss of 0.0001 isn't learning, it's memorizing. We'd overfitted catastrophically on 500 samples, used only Dice loss which doesn't penalize noisy boundaries, had no validation set to catch it early, and most critically: we'd tried to improve a model that was already nearly perfect. When your baseline is 95.8%, there's almost no room to go up and a huge amount of room to fall.

That failure taught us more than a success would have. Don't fix what isn't broken. Low loss is a warning, not a reward.

Challenge 2: Carpet defects, synthetic data, and a modest win

The second challenge had a real industrial motivation: automated quality control in textile manufacturing. A carpet production line generates thousands of meters of fabric daily. Humans visually scan for defects and they miss things, especially after hours of inspection. Could a specialized vision model catch what human eyes miss?

The catch: we had only 89 labeled defect images from the MVTec AD dataset. That's nowhere near enough to train a neural network.

So we built a synthetic defect generator. We took 280 defect-free carpet images and programmatically injected three types of anomalies: darkened patches (simulating holes), thin dark lines (simulating thread pulls), and hue-shifted circles (simulating dye bleeding). From those 280 clean images, we generated 500 synthetic training samples.

Applying lessons from the wood failure: no more wild loss drops, no more training without watching the curves. The loss decreased steadily from 0.25 to 0.15 across 5 epochs. Stable, gradual, healthy.

On real defects: 36.18% mIoU, up from the baseline's 29.43%. A 6.75-point improvement on industrial data our model had never seen during training.

Not spectacular. But the direction was right, and we now had a framework that worked.

Challenge 3: Camouflaged animals, where it finally clicked

Evolution is a ruthless optimizer for defeating visual detection. It's one of computer vision's hardest problems for good reason.

Many animals have evolved over millions of years to be visually undetectable, to predators, prey, and humans. COD10K is a dataset of 10,000 images of these animals in the wild, each with a ground truth mask.

We applied every lesson from the previous two experiments. More data: 6,000 training images, augmented to 12,000 with horizontal flips. Bigger model: we upgraded from MobileSAM (40M parameters) to SAM ViT-H, the largest SAM variant at 636M parameters. Combined loss: 50% BCE + 50% Dice loss. BCE forces pixel-perfect boundaries; Dice maintains shape coherence. Multi-prompt training: we randomly alternated between center-point prompts and bounding box prompts, so the model couldn't rely on memorizing a prompt pattern. It had to actually understand where the animal was.

The training curves were everything we wanted: steady, gradual improvement over 7 epochs, loss dropping from 0.142 to 0.106 with no spikes, no collapses.

Results:

- Base SAM ViT-H: 47.52% mIoU

- Our specialized model: 66.35% mIoU

- +18.83 percentage points. A 39.6% relative improvement.

That gap is the difference between a model that's barely better than chance on this task and one that's genuinely useful.

The zoo visit

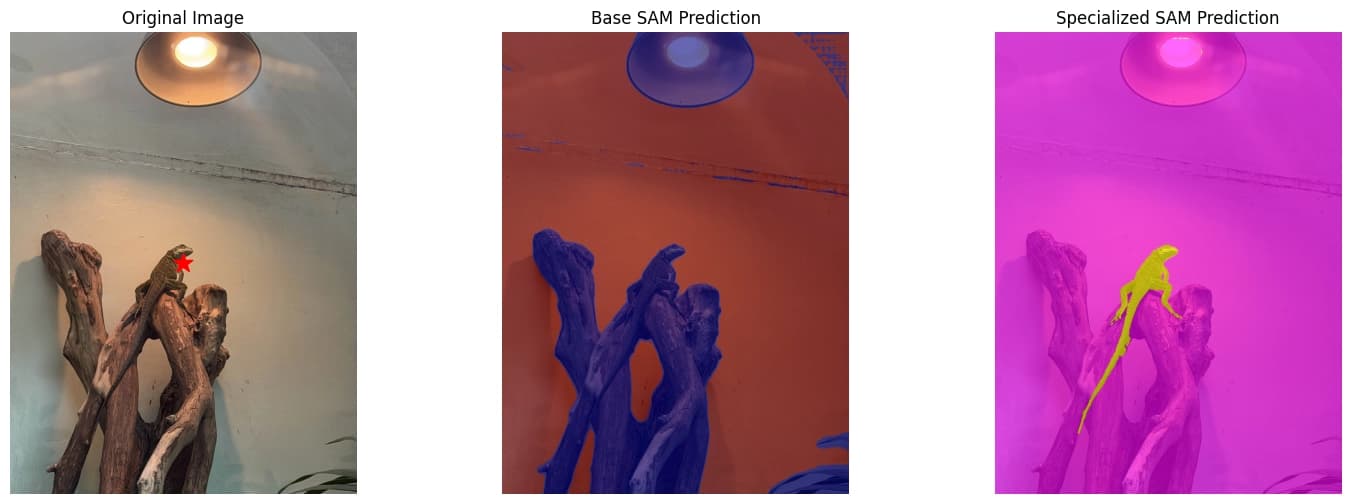

We didn't stop at the benchmark. We took the model to the Smithsonian National Zoo and photographed real animals in their enclosures, completely out-of-distribution from anything in our training set.

It held up. Not perfectly, but meaningfully. Seeing your model correctly segment a camouflaged animal in a real environment you photographed yourself is a different kind of validation than a test set number. It tells you the model learned something real.

What all three experiments showed, side by side

| Experiment | Baseline mIoU | Specialized mIoU | Change |

|---|---|---|---|

| Wood textures | 95.80% | 59.52% | -36.28% |

| Carpet defects | 29.43% | 36.18% | +6.75% |

| Camouflaged animals | 47.52% | 66.35% | +18.83% |

The single best predictor of whether specialization works isn't the model, the loss function, or the data volume. It's the baseline. How much is the model already struggling? Wood at 95.8% had nowhere to go but down. Camouflage at 47.5% had real room to improve.

Fine-tuning a model that's already excellent on your task is not a research project. It's just risk.

Fail, understand, apply, succeed

You learn more from a well-documented failure than from an undocumented success. The wood experiment failed, but because we understood exactly why, every subsequent experiment was better for it. The carpet experiment succeeded modestly, but because we understood its limits, we knew what to change. By the time we reached camouflage, we weren't guessing anymore.

That progression (fail, understand, apply, succeed) is what doing research actually looks like.